Thursday, May 24, 2018

Kubernetes Containerd Integration Goes GA


Authors: Lantao Liu, Software Engineer, Google and Mike Brown, Open Source Developer Advocate, IBM

In a previous blog post, Containerd Brings More Container Runtime Options for Kubernetes, we introduced the alpha version of the Kubernetes containerd integration. After another six months of development, the integration with containerd is now generally available! You can now use containerd 1.1 as the container runtime for production Kubernetes clusters!

Containerd 1.1 works with Kubernetes 1.10 and above, and supports all Kubernetes features. The test coverage of the containerd integration on Google Cloud Platform in the Kubernetes test infrastructure is now equivalent to that of the Docker integration (see the test dashboard).

We’re very glad to see containerd rapidly grow to this big milestone. Alibaba Cloud has been actively using containerd since its first day, and thanks to its emphasis on simplicity and robustness, it is a perfect container engine for our Serverless Kubernetes product, which has high requirements for performance and stability. No doubt, containerd will be a core engine of the container era and will continue to drive innovation forward.

— Xinwei, Staff Engineer in Alibaba Cloud

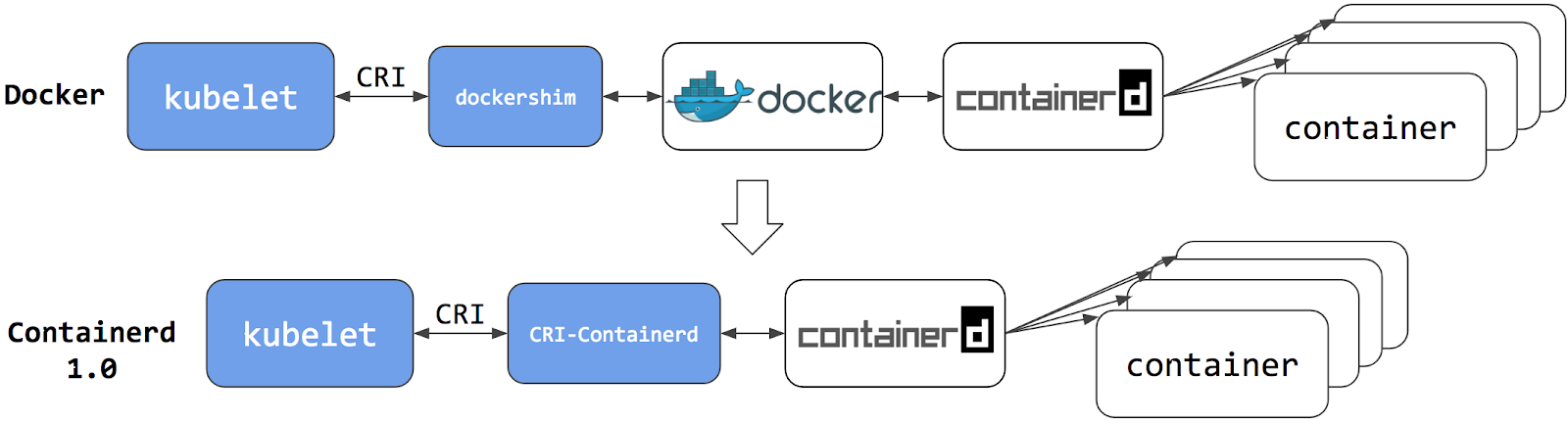

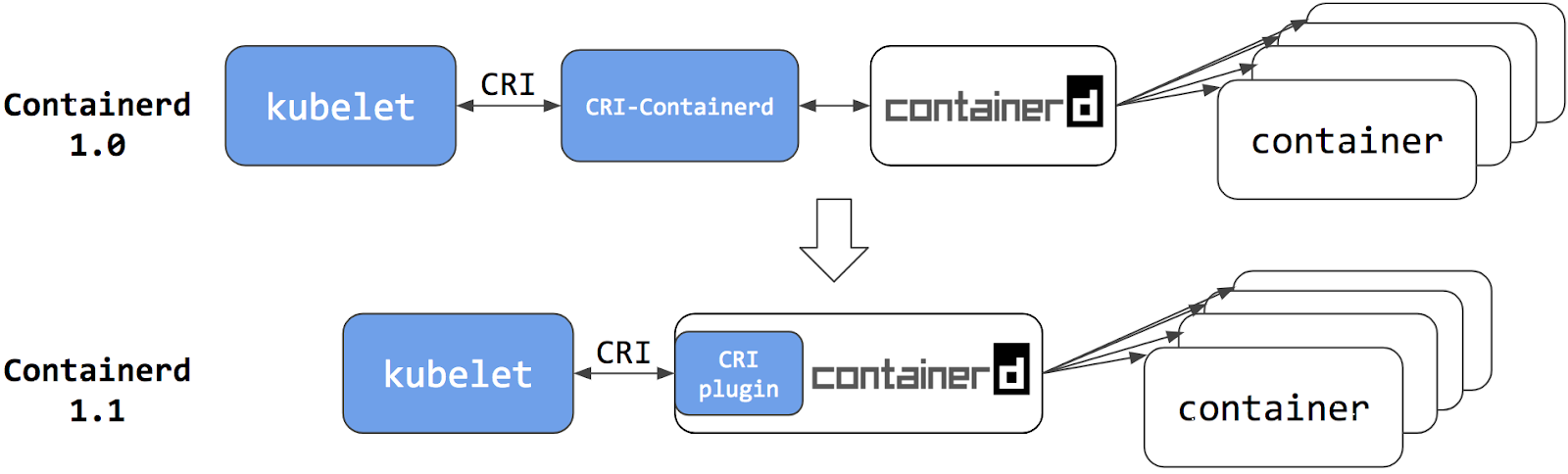

Architecture Improvements

The Kubernetes containerd integration architecture has evolved twice. Each evolution has made the stack more stable and efficient.

Containerd 1.0 - CRI-Containerd (end of life)

For containerd 1.0, a daemon called cri-containerd was required to operate between Kubelet and containerd. Cri-containerd handled the Container Runtime Interface (CRI) service requests from Kubelet and used containerd to manage containers and container images correspondingly. Compared to the Docker CRI implementation (dockershim), this eliminated one extra hop in the stack.

However, cri-containerd and containerd 1.0 were still two different daemons that interacted via gRPC. The extra daemon in the loop made the stack more complex for users to understand and deploy, and introduced unnecessary communication overhead.

Containerd 1.1 - CRI Plugin (current)

In containerd 1.1, the cri-containerd daemon has been refactored into a containerd CRI plugin. The CRI plugin is built into containerd 1.1 and enabled by default. Unlike cri-containerd, the CRI plugin interacts with containerd through direct function calls. This new architecture makes the integration more stable and efficient, and eliminates another gRPC hop in the stack. Users can now use Kubernetes with containerd 1.1 directly; the cri-containerd daemon is no longer needed.

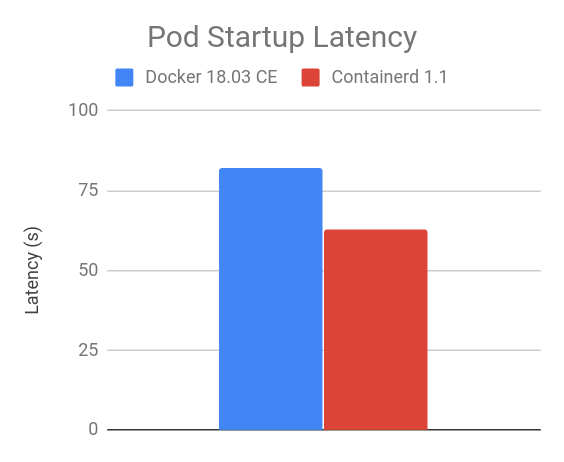
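Because the CRI plugin is served on containerd's own socket, Kubelet only needs to be told to use a remote CRI runtime at that endpoint. The Python sketch below assembles those Kubelet flags. It assumes containerd's default socket path `/run/containerd/containerd.sock` and the `--container-runtime=remote` / `--container-runtime-endpoint` flags used by Kubelet in the Kubernetes 1.10 era; the helper name `kubelet_cri_flags` is hypothetical, and your installer may set additional flags.

```python
import shlex

# Assumed default containerd socket; adjust if your containerd config
# listens on a different gRPC address.
DEFAULT_CONTAINERD_SOCKET = "/run/containerd/containerd.sock"


def kubelet_cri_flags(socket_path: str = DEFAULT_CONTAINERD_SOCKET) -> list[str]:
    """Return Kubelet flags that select a remote CRI runtime.

    With containerd 1.1 the CRI plugin runs inside containerd, so the
    endpoint is containerd's own socket rather than a cri-containerd socket.
    """
    endpoint = f"unix://{socket_path}"
    return [
        "--container-runtime=remote",
        f"--container-runtime-endpoint={endpoint}",
    ]


if __name__ == "__main__":
    # Print the flags as a single shell-safe string to paste into a unit file.
    print(shlex.join(kubelet_cri_flags()))
```

The socket path is a parameter so the same helper works when containerd is configured to listen elsewhere.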

Performance

Improving performance was one of the major focus items for the containerd 1.1 release. Performance was optimized in terms of pod startup latency and daemon resource usage.

The following results are a comparison between containerd 1.1 and Docker 18.03 CE. The containerd 1.1 integration uses the CRI plugin built into containerd, and the Docker 18.03 CE integration uses dockershim.

The results were generated using the Kubernetes node performance benchmark, which is part of the Kubernetes node e2e test suite. Most of the containerd benchmark data is publicly accessible on the node performance dashboard.

Pod Startup Latency

The “105 pod batch startup benchmark” results show that the containerd 1.1 integration has lower pod startup latency than Docker 18.03 CE integration with dockershim (lower is better).

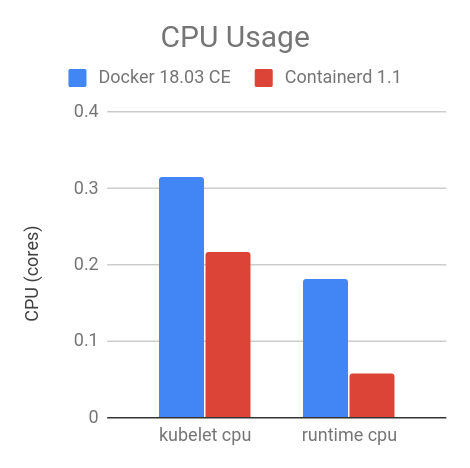

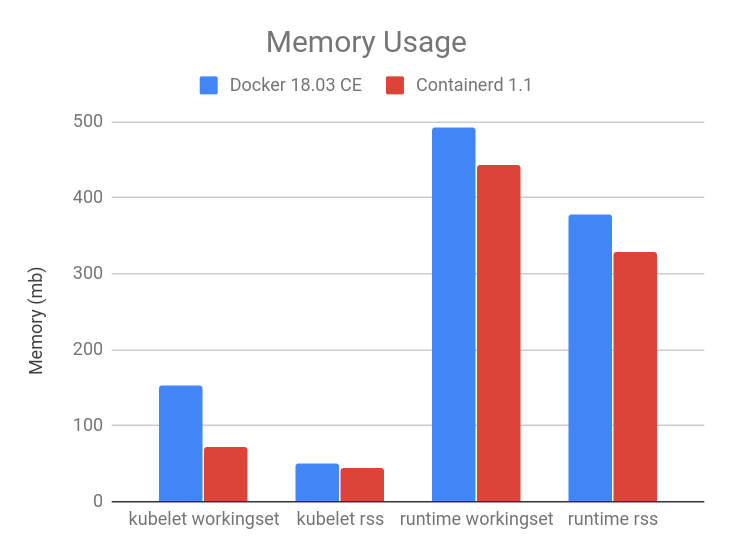

CPU and Memory

At steady state with 105 pods, the containerd 1.1 integration consumes less CPU and memory overall than the Docker 18.03 CE integration with dockershim. The results vary with the number of pods running on the node; 105 was chosen because it is the current default for the maximum number of user pods per node.

As the benchmark figures show, compared to the Docker 18.03 CE integration with dockershim, the containerd 1.1 integration has 30.89% lower kubelet CPU usage, 68.13% lower container runtime CPU usage, 11.30% lower kubelet resident set size (RSS) memory usage, and 12.78% lower container runtime RSS memory usage.
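To read these percentages as ratios, assume each one is a relative reduction against the Docker 18.03 CE baseline, that is (docker - containerd) / docker. Under that assumption, containerd uses a fraction 1 - p/100 of the Docker figure. The Python sketch below applies this to the four numbers quoted above; it uses no absolute measurements, and the helper names are illustrative.

```python
# Percent reductions quoted in this post, assumed to be relative to
# Docker 18.03 CE with dockershim: (docker - containerd) / docker * 100.
REDUCTIONS = {
    "kubelet CPU": 30.89,
    "container runtime CPU": 68.13,
    "kubelet RSS": 11.30,
    "container runtime RSS": 12.78,
}


def remaining_fraction(percent_lower: float) -> float:
    """Fraction of the baseline still used after a reduction of percent_lower %."""
    return 1.0 - percent_lower / 100.0


def times_smaller(percent_lower: float) -> float:
    """How many times smaller the new value is than the baseline."""
    return 1.0 / remaining_fraction(percent_lower)


if __name__ == "__main__":
    for metric, pct in REDUCTIONS.items():
        print(f"{metric}: {remaining_fraction(pct):.4f} of Docker's usage "
              f"({times_smaller(pct):.2f}x smaller)")
```

For example, the 68.13% drop in container runtime CPU means containerd uses about 0.32 of Docker's CPU, roughly 3.1 times less, while the RSS reductions are closer to a 1.1x difference.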

crictl

A container runtime command-line interface (CLI) is a useful tool for system and application troubleshooting. When using Docker as the container runtime for Kubernetes, system administrators sometimes log in to the Kubernetes node to run Docker commands for collecting system and/or application information. For example, one may use docker ps and docker inspect to check application process status, docker images to list images on the node, and docker info to identify the container runtime configuration.

For containerd and all other CRI-compatible container runtimes, such as dockershim, we recommend using crictl instead of the Docker CLI for troubleshooting pods, containers, and container images on Kubernetes nodes.

crictl provides an experience similar to the Docker CLI for Kubernetes node troubleshooting, and it works consistently across all CRI-compatible container runtimes. It is hosted in the kubernetes-incubator/cri-tools repository, and the current version is v1.0.0-beta.1. crictl is designed to resemble the Docker CLI to ease the transition for users, but it is not exactly the same. There are a few important differences, explained below.

Limited Scope - crictl is a Troubleshooting Tool

The scope of crictl is limited to troubleshooting; it is not a replacement for docker or kubectl. Docker’s CLI provides a rich set of commands, making it a very useful development tool, but it is not the best fit for troubleshooting on Kubernetes nodes. Some Docker commands, such as docker network and docker build, are not useful to Kubernetes, and some, such as docker rename, may even break the system. crictl provides just enough commands for node troubleshooting, which arguably makes it safer to use on production nodes.
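The Python lookup table below collects the Docker commands mentioned in this post and the command this post suggests in their place on a containerd node. Commands that crictl deliberately leaves out, such as docker network, docker build, and docker rename, map to None. The helper `crictl_equivalent` is hypothetical and only covers commands named in this post; `crictl info` is included as the usual counterpart to docker info.

```python
from typing import Optional

# Docker CLI subcommands mentioned in this post, mapped to the suggested
# CRI-side command for node troubleshooting.
# None means crictl intentionally offers no equivalent.
DOCKER_TO_CRI = {
    "ps": "crictl ps",         # application containers only; use `crictl pods` for pods
    "inspect": "crictl inspect",
    "images": "crictl images",
    "info": "crictl info",
    "pull": "crictl pull",
    "load": "ctr cri load",    # containerd 1.1's ctr, for loading an image tarball
    "network": None,           # not useful to Kubernetes
    "build": None,             # not useful to Kubernetes
    "rename": None,            # may break the system
}


def crictl_equivalent(docker_subcommand: str) -> Optional[str]:
    """Return the suggested replacement command, or None if there is none.

    Raises KeyError for subcommands this table does not cover.
    """
    return DOCKER_TO_CRI[docker_subcommand]


if __name__ == "__main__":
    for sub in ("ps", "images", "rename"):
        print(f"docker {sub} -> {crictl_equivalent(sub)}")
```

Raising KeyError for unknown subcommands keeps "no equivalent by design" (None) distinct from "not covered here".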

Kubernetes Oriented

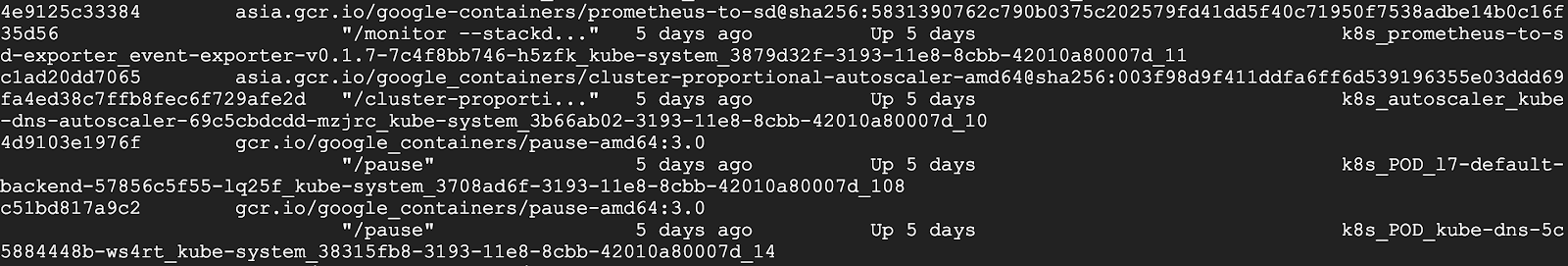

crictl offers a more Kubernetes-friendly view of containers. The Docker CLI lacks core Kubernetes concepts such as pods and namespaces, so it can’t provide a clear view of containers and pods. For example, docker ps shows somewhat obscure, long Docker container names, and lists pause containers and application containers together:

However, pause containers are a pod implementation detail, where one pause container is used for each pod, and thus should not be shown when listing containers that are members of pods.

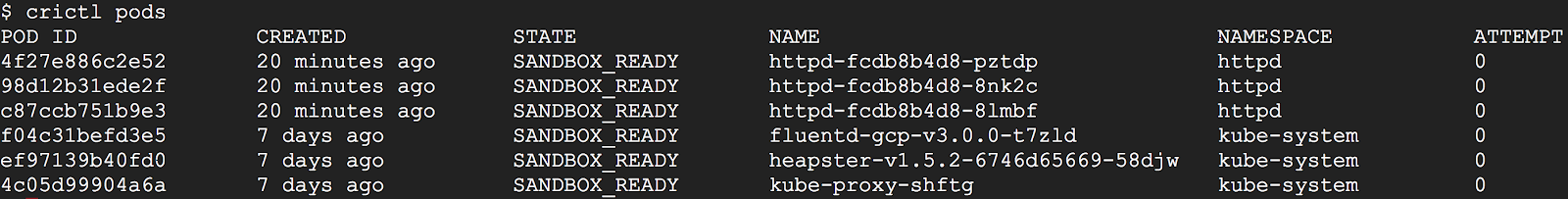

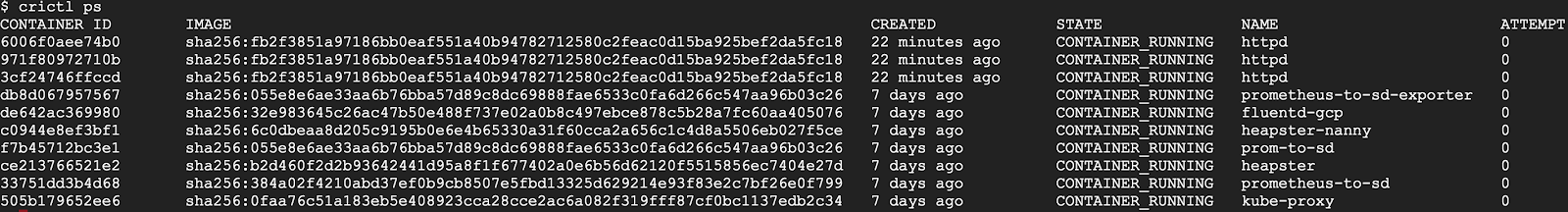

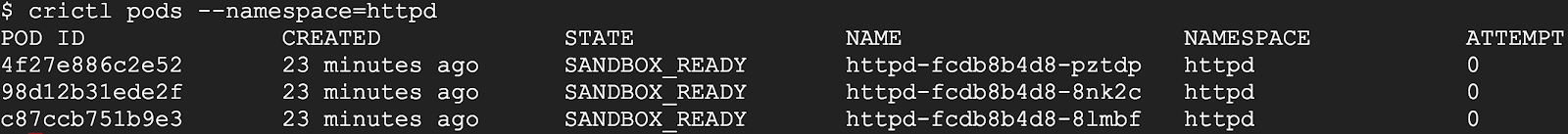
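The long names in docker ps output come from how dockershim names containers. To our knowledge, dockershim uses the pattern `k8s_<container>_<pod>_<namespace>_<pod-uid>_<attempt>` and puts `POD` in the container slot for each pod's pause container. The Python sketch below assumes that pattern and uses made-up names; it splits a docker ps name list into pods (one per pause container) and application containers, roughly the separation that crictl pods and crictl ps give you directly. All function names are hypothetical.

```python
from typing import List, NamedTuple, Optional, Tuple

# Assumed dockershim convention: "POD" in the container slot marks a pause container.
PAUSE_NAME = "POD"


class ParsedName(NamedTuple):
    container: str
    pod: str
    namespace: str
    pod_uid: str
    attempt: int


def parse_dockershim_name(name: str) -> Optional[ParsedName]:
    """Parse k8s_<container>_<pod>_<namespace>_<pod-uid>_<attempt>.

    Returns None for names that do not follow this pattern, e.g. containers
    started directly with `docker run`. Kubernetes names and UIDs cannot
    contain underscores, so splitting on "_" is unambiguous.
    """
    parts = name.lstrip("/").split("_")
    if len(parts) != 6 or parts[0] != "k8s" or not parts[5].isdigit():
        return None
    _, container, pod, namespace, pod_uid, attempt = parts
    return ParsedName(container, pod, namespace, pod_uid, int(attempt))


def split_pods_and_containers(
    names: List[str],
) -> Tuple[List[Tuple[str, str]], List[Tuple[str, str, str]]]:
    """Separate pause containers (one per pod) from application containers."""
    pods: List[Tuple[str, str]] = []
    containers: List[Tuple[str, str, str]] = []
    for name in names:
        parsed = parse_dockershim_name(name)
        if parsed is None:
            continue  # not created by Kubernetes
        if parsed.container == PAUSE_NAME:
            pods.append((parsed.namespace, parsed.pod))
        else:
            containers.append((parsed.namespace, parsed.pod, parsed.container))
    return pods, containers


if __name__ == "__main__":
    # Made-up `docker ps` names, for illustration only.
    sample = [
        "k8s_POD_web-0_default_6a1f2c3d-0000-4000-8000-000000000001_0",
        "k8s_nginx_web-0_default_6a1f2c3d-0000-4000-8000-000000000001_0",
        "scratch_dev_box",
    ]
    pods, containers = split_pods_and_containers(sample)
    print("pods:", pods)              # pods: [('default', 'web-0')]
    print("containers:", containers)  # containers: [('default', 'web-0', 'nginx')]
```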

crictl, by contrast, is designed for Kubernetes. It has different sets of commands for pods and containers. For example, crictl pods lists pod information, and crictl ps only lists application container information. All information is well formatted into table columns.

As another example, crictl pods includes a --namespace option for filtering pods by the namespaces specified in Kubernetes.
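For scripted node checks, the namespace filter can be driven from Python. The sketch below assumes crictl is on the PATH and already configured to reach the containerd socket, and that your crictl version supports `--quiet` (print pod IDs only) alongside `--namespace`. `list_pod_ids` is a hypothetical helper; the command runner is injectable so the parsing can be exercised without a real node.

```python
import subprocess
from typing import Callable, List


def list_pod_ids(
    namespace: str,
    run: Callable[..., subprocess.CompletedProcess] = subprocess.run,
) -> List[str]:
    """Return IDs of pod sandboxes in the given Kubernetes namespace."""
    cmd = ["crictl", "pods", "--namespace", namespace, "--quiet"]
    # check=True raises CalledProcessError if crictl exits non-zero.
    result = run(cmd, check=True, capture_output=True, text=True)
    # With --quiet, crictl prints one pod ID per line; skip blank lines.
    return [line.strip() for line in result.stdout.splitlines() if line.strip()]


if __name__ == "__main__":
    for pod_id in list_pod_ids("kube-system"):
        print(pod_id)
```

Passing the runner as a parameter keeps the default behavior (a real subprocess call) while letting tests substitute a fake.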

For more details about how to use crictl with containerd:

What about Docker Engine?

“Does switching to containerd mean I can’t use Docker Engine anymore?” We hear this question a lot; the short answer is NO.

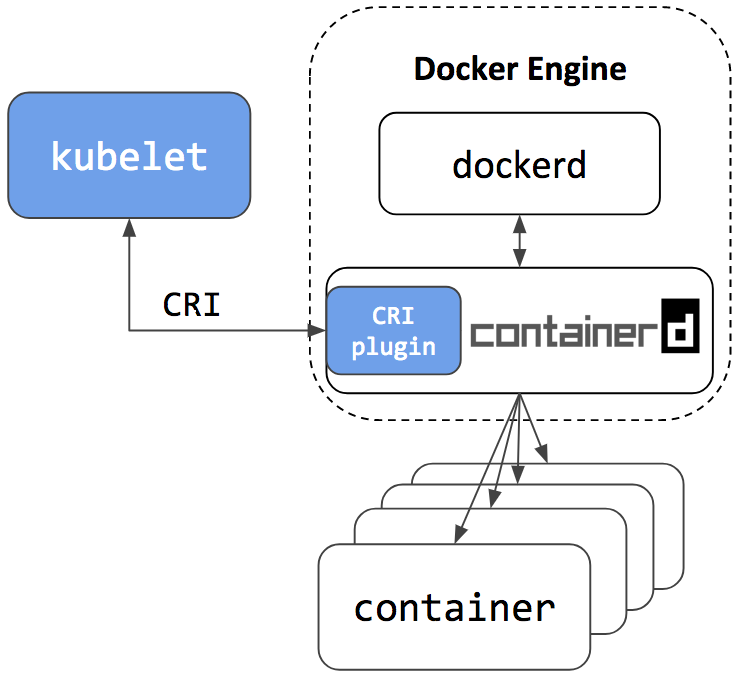

Docker Engine is built on top of containerd. The next release of Docker Community Edition (Docker CE) will use containerd version 1.1, with the CRI plugin built in and enabled by default. This means users will have the option to keep using Docker Engine for typical Docker use cases, while also configuring Kubernetes to use the underlying containerd that ships with, and is simultaneously used by, Docker Engine on the same node. The architecture figure shows the same containerd being used by both Docker Engine and Kubelet.

Since containerd is used by both Kubelet and Docker Engine, users who choose the containerd integration will not only get new Kubernetes features, performance, and stability improvements, but will also have the option of keeping Docker Engine around for other use cases.

A containerd namespace mechanism guarantees that Kubelet and Docker Engine can’t see or access each other’s containers and images, so they won’t interfere with each other. This also means that:

- Users won’t see Kubernetes-created containers with the docker ps command; use crictl ps instead. Conversely, users won’t see containers created by the Docker CLI in Kubernetes or with the crictl ps command. The crictl create and crictl runp commands are only for troubleshooting; manually starting a pod or container with crictl on production nodes is not recommended.

- Users won’t see Kubernetes-pulled images with the docker images command; use the crictl images command instead. Conversely, Kubernetes won’t see images created by the docker pull, docker load, or docker build commands; use the crictl pull command instead, or ctr cri load if you have to load an image.
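The isolation described above comes from containerd namespaces: each container and image is stored under a namespace, and a client only sees objects in the namespace it operates in. The CRI plugin uses the `k8s.io` namespace and Docker Engine runs its containers in `moby`, which is also why inspecting Kubernetes' images with `ctr` requires `-n k8s.io`. The Python below is a toy in-memory model of that behavior, not containerd's implementation; `NamespacedStore` and its methods are hypothetical.

```python
from collections import defaultdict
from typing import DefaultDict, Set

CRI_NAMESPACE = "k8s.io"   # containerd namespace used by the CRI plugin (Kubelet)
DOCKER_NAMESPACE = "moby"  # containerd namespace Docker Engine uses for its containers


class NamespacedStore:
    """Toy model of containerd namespacing: lookups never cross namespaces."""

    def __init__(self) -> None:
        self._containers: DefaultDict[str, Set[str]] = defaultdict(set)

    def create(self, namespace: str, container_id: str) -> None:
        self._containers[namespace].add(container_id)

    def list_containers(self, namespace: str) -> Set[str]:
        # Return a copy so one client cannot modify another client's view.
        return set(self._containers.get(namespace, ()))


if __name__ == "__main__":
    store = NamespacedStore()
    store.create(CRI_NAMESPACE, "nginx")       # started by Kubelet via the CRI plugin
    store.create(DOCKER_NAMESPACE, "dev-box")  # started with `docker run`
    print(store.list_containers(CRI_NAMESPACE))     # {'nginx'}
    print(store.list_containers(DOCKER_NAMESPACE))  # {'dev-box'}
```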

Summary

- Containerd 1.1 natively supports CRI. It can be used directly by Kubernetes.

- Containerd 1.1 is production ready.

- Containerd 1.1 has good performance in terms of pod startup latency and system resource utilization.

- crictl is the CLI tool to talk with containerd 1.1 and other CRI-conformant container runtimes for node troubleshooting.

- The next stable release of Docker CE will include containerd 1.1. Users have the option to continue using Docker for use cases not specific to Kubernetes, and configure Kubernetes to use the same underlying containerd that comes with Docker.

We’d like to thank all the contributors from Google, IBM, Docker, ZTE, ZJU and many other individuals who made this happen!

For a detailed list of changes in the containerd 1.1 release, please see the release notes here: https://github.com/containerd/containerd/releases/tag/v1.1.0

Try it out

To set up a Kubernetes cluster using containerd as the container runtime:

- For a production quality cluster on GCE brought up with kube-up.sh, see here.

- For a multi-node cluster installer and bring up steps using ansible and kubeadm, see here.

- For creating a cluster from scratch on Google Cloud, see Kubernetes the Hard Way.

- For a custom installation from release tarball, see here.

- To install using LinuxKit on a local VM, see here.

Contribute

The containerd CRI plugin is an open source GitHub project within containerd: https://github.com/containerd/cri. Any contributions in terms of ideas, issues, and/or fixes are welcome. The getting started guide for developers is a good place to start for contributors.

Community

The project is developed and maintained jointly by members of the Kubernetes SIG-Node community and the containerd community. We’d love to hear feedback from you. To join the communities:

- sig-node community site

- Slack:

  - #sig-node channel in kubernetes.slack.com

  - #containerd channel in https://dockr.ly/community

- Mailing List: https://groups.google.com/forum/#!forum/kubernetes-sig-node
